GNN-Based Retriever for Knowledge-Grounded LLMs

Extended SubgraphRAG with a 2-layer GraphSAGE encoder for neighborhood-aware triple retrieval. Improved answer recall and Llama-3.1 reasoning on WebQSP.

Pipeline

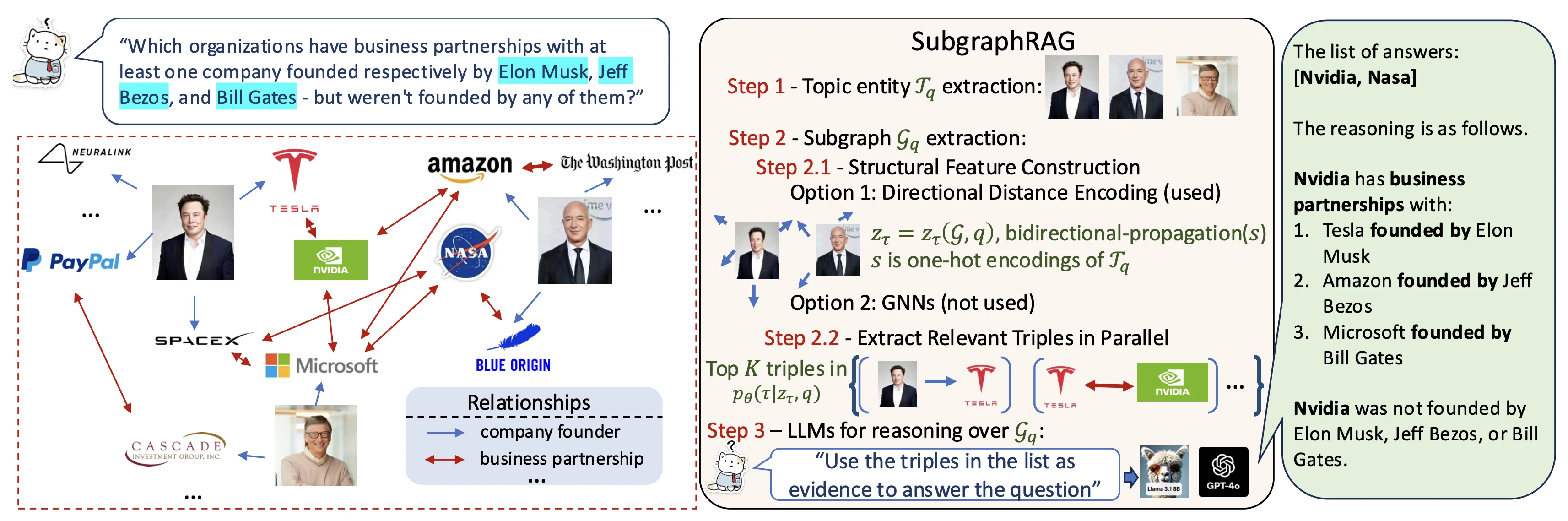

Knowledge-graph RAG answers a question by retrieving a compact set of KG triples and handing them to an LLM reasoner. The SubgraphRAG baseline does this in four stages: extract topic entities from the question, pull a local subgraph around them, score candidate triples against the question, and feed the top-scoring triples to the LLM. The baseline’s scorer is a simple MLP over distance-encoded features: it rates each triple independently, ignoring the local graph structure that the subgraph itself provides.
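To make the baseline's limitation concrete, here is a minimal sketch of independent triple scoring followed by top-k selection. It is not the SubgraphRAG code: the feature layout (a question-triple similarity plus a toy two-bit distance encoding marking whether the head or tail is a topic entity), the one-hidden-layer MLP, and the names `mlp_score` and `top_k_triples` are all hypothetical. The point is structural: each triple's score depends only on its own feature vector.

```python
from typing import List, Sequence, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)
Matrix = List[List[float]]


def linear(W: Matrix, b: Sequence[float], x: Sequence[float]) -> List[float]:
    """Compute W @ x + b, with W stored row-major as (out_dim x in_dim)."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]


def mlp_score(x: Sequence[float], W1: Matrix, b1: Sequence[float],
              w2: Sequence[float], b2: float) -> float:
    """One-hidden-layer MLP with ReLU, returning a scalar relevance score."""
    hidden = [max(0.0, z) for z in linear(W1, b1, x)]
    return sum(w * h for w, h in zip(w2, hidden)) + b2


def top_k_triples(triples: Sequence[Triple], features: Sequence[Sequence[float]],
                  params: tuple, k: int) -> List[Triple]:
    """Score every triple independently and keep the k highest-scoring ones."""
    # Each score sees only that triple's own feature vector: no neighbor information.
    scored = [(mlp_score(f, *params), t) for t, f in zip(triples, features)]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [t for _, t in scored[:k]]


if __name__ == "__main__":
    # Hypothetical features: [question-triple similarity, head is topic entity, tail is topic entity]
    triples = [("Obama", "spouse", "Michelle"),
               ("Obama", "born_in", "Honolulu"),
               ("Honolulu", "located_in", "Hawaii")]
    features = [[0.9, 1.0, 0.0], [0.2, 1.0, 0.0], [0.1, 0.0, 0.0]]
    params = ([[1.0, 0.5, 0.5], [0.0, 1.0, -1.0]], [0.0, 0.0], [1.0, 0.5], 0.0)
    print(top_k_triples(triples, features, params, k=2))
```

Because a score is a function of one row of `features`, two triples with identical features always receive identical scores, whatever their neighbors look like; that is the gap a message-passing encoder is meant to close.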

I replaced the MLP with a 2-layer GraphSAGE encoder so scoring uses neighborhood context. The authors originally proposed a GNN in this slot but reported no gain and shipped the MLP; I revisited that choice with different training dynamics and depth.
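A GraphSAGE layer updates each node from its own state and the mean of its neighbors' states, so stacking two layers gives every entity a two-hop receptive field. The sketch below uses the mean-aggregator form `h_v' = W_self @ h_v + W_neigh @ mean(h_u for u in N(v))`, with ReLU between layers (equivalent to one weight matrix applied to the concatenation of `h_v` and the neighbor mean). It assumes message passing runs over the entity nodes of the retrieved subgraph, treated as undirected, with the final layer left linear. The post does not say how the encoder is wired into the scorer, so these choices and all function names are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

Vec = List[float]
Matrix = List[List[float]]
Triple = Tuple[str, str, str]  # (head, relation, tail)


def matvec(W: Matrix, x: Sequence[float]) -> Vec:
    """Row-major matrix-vector product W @ x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def mean_vec(vectors: List[Vec], dim: int) -> Vec:
    """Element-wise mean; an isolated node gets a zero neighbor message."""
    if not vectors:
        return [0.0] * dim
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def build_neighbors(triples: Sequence[Triple]) -> Dict[str, List[str]]:
    """Adjacency lists over entities, ignoring edge direction and relation type."""
    nbrs: Dict[str, List[str]] = {}
    for head, _, tail in triples:
        nbrs.setdefault(head, []).append(tail)
        nbrs.setdefault(tail, []).append(head)
    return nbrs


def sage_layer(h: Dict[str, Vec], nbrs: Dict[str, List[str]],
               W_self: Matrix, W_neigh: Matrix, relu: bool) -> Dict[str, Vec]:
    """One GraphSAGE-mean layer: W_self @ h_v + W_neigh @ mean over neighbors of v."""
    out: Dict[str, Vec] = {}
    for v, hv in h.items():
        agg = mean_vec([h[u] for u in nbrs.get(v, [])], len(hv))
        z = [a + b for a, b in zip(matvec(W_self, hv), matvec(W_neigh, agg))]
        out[v] = [max(0.0, zi) for zi in z] if relu else z
    return out


def encode(x: Dict[str, Vec], triples: Sequence[Triple],
           layers: Sequence[Tuple[Matrix, Matrix]]) -> Dict[str, Vec]:
    """Stack SAGE layers: ReLU after every layer except the last."""
    nbrs = build_neighbors(triples)
    h = x
    for i, (W_self, W_neigh) in enumerate(layers):
        h = sage_layer(h, nbrs, W_self, W_neigh, relu=i < len(layers) - 1)
    return h


if __name__ == "__main__":
    triples = [("a", "r", "b"), ("b", "r", "c")]  # toy chain a - b - c
    x = {"a": [1.0], "b": [2.0], "c": [4.0]}
    identity = ([[1.0]], [[1.0]])
    print(encode(x, triples, [identity, identity]))
```

In the toy chain, node `a` reaches `c` only through `b`: changing `c`'s input leaves `a`'s one-layer embedding unchanged but shifts its two-layer output. Under this sketch's assumptions, the resulting entity embeddings would stand in for (or augment) the per-triple features that an independent scorer consumes.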

On WebQSP, answer recall and GPT-labeled triple recall at improved (ans_recall , GPT triple recall ) with comparable retrieval latency. Downstream Llama-3.1-8B reasoning improved too (Hit , Macro ).
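The post does not define its metrics, so the sketch below encodes common definitions as assumptions: answer recall as the fraction of gold answer entities that appear in any retrieved triple, triple recall as the fraction of GPT-labeled relevant triples that were retrieved, Hit as whether any gold answer string occurs in the LLM's output, and "Macro" read as macro-averaged F1 over per-question answer sets. The function names are hypothetical and the string matching is deliberately naive.

```python
from typing import Iterable, Sequence, Set, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)


def answer_recall(retrieved: Sequence[Triple], answers: Set[str]) -> float:
    """Fraction of gold answers appearing as head or tail of some retrieved triple."""
    if not answers:
        return 0.0
    covered = {e for head, _, tail in retrieved for e in (head, tail)}
    return len(answers & covered) / len(answers)


def triple_recall(retrieved: Sequence[Triple], relevant: Set[Triple]) -> float:
    """Fraction of labeled-relevant triples that made it into the retrieved set."""
    if not relevant:
        return 0.0
    return len(relevant & set(retrieved)) / len(relevant)


def hit(prediction_text: str, answers: Iterable[str]) -> float:
    """1.0 if any gold answer occurs (case-insensitively) in the LLM output."""
    text = prediction_text.lower()
    return 1.0 if any(a.lower() in text for a in answers) else 0.0


def f1(predicted: Set[str], answers: Set[str]) -> float:
    """Per-question F1 between predicted and gold answer sets."""
    tp = len(predicted & answers)
    if tp == 0:
        return 0.0
    precision, recall = tp / len(predicted), tp / len(answers)
    return 2 * precision * recall / (precision + recall)


def macro_average(scores: Iterable[float]) -> float:
    """Unweighted mean over questions, so each question counts equally."""
    values = list(scores)
    return sum(values) / len(values) if values else 0.0
```

Macro averaging weights every question equally, so a few questions with large answer sets cannot dominate the reported score.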